AI-First Data, Part 1: Product Event Tracking

The Context Problem

AI is accelerating software engineering at an absurd pace. Engineers at top companies report that most of their code is now written and reviewed by AI. Meanwhile, the data science craft is evolving more slowly. This is a context problem, and data science can catch up if we solve it.

Engineering context is already in the format LLMs consume best. Code lives in repositories as structured, version-controlled text. Features are designed and scoped before work begins. Tests provide tight feedback loops. An LLM can read the full surface area of a codebase, propose a change, and get concrete feedback in seconds.

Data science context lives across a completely different surface. It spans human interaction: meetings, Slack threads, strategy papers, stakeholder presentations. It spans code: queries, notebooks, data dictionaries, pipeline configs. And it spans tribal knowledge, the unwritten rules that determine whether your analysis is correct or subtly, catastrophically wrong. The total_revenue column in table_1 is not the same as total_revenue in table_2, and there is almost no way to discover why, or which one gets reported to senior leadership, without talking to the person who created both six years ago.

Part of this is inherent to the domain. "Why is acquisition slowing down?" has no spec, no test suite, no defined endpoint. But even clearly scoped questions like "how many users completed onboarding last week?" require scattered context about what "completed onboarding" actually means in your data model. The question is simple, but the mapping between that question and the correct data is not, and it often lives in places an LLM cannot reach.

We know how to fix the reachable part of this problem. And fixing it starts a flywheel.

The Semantic Layer: An Old Idea with New Potential

The concept of a semantic layer, a structured mapping between business language and underlying data, has been around for decades. BI tools in the 1980s and 1990s introduced abstraction layers that mapped raw tables to human-friendly concepts. The linked data movement in the 2000s pushed similar ideas about making meaning machine-readable. The modern data stack reframed the idea as a metrics layer: dimensions, measures, joins, standardized definitions. The AI-first data architecture framing is just the latest iteration.

These layers worked in theory. In practice, maintaining them required dedicated central teams working full-time. Any change in the product, the tech stack, or team priorities could break whatever mapping once existed. The layers sometimes decayed faster than they could be updated, and some organizations eventually stopped trying.

Engineering never had this problem at the same scale because its context tracking was (mostly) built in. Repositories, type systems, test suites, and version control are all semantic artifacts. They encode what things mean, how they relate, and when they changed. Engineers didn't set out to build a semantic layer. They got one as a byproduct of how software is developed.

Analytics did not get that byproduct. Meaning was not annotated or version-controlled. That's the gap AI is now exposing.

LLMs can already turn natural language into SQL or Python. What they cannot do is guess which of two total_revenue columns is the one the CFO uses, or infer that a clickstream event name was reused after the interface completely changed. They cannot recover context that was never recorded. But if the mapping between language and data is explicit and versioned, it unlocks two things:

- AI becomes dramatically more capable today, grounding analysis in real definitions instead of guessing.

- AI can help maintain that mapping tomorrow, detecting drift, flagging inconsistencies, proposing updates. The maintenance cost that historically killed semantic layers collapses. Structure becomes a compounding asset instead of a depreciating one.
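To make "explicit and versioned" concrete, here is a minimal TypeScript sketch of a single semantic-layer lookup. The MetricDefinition shape, the certified flag, and both revenue definitions are hypothetical, invented for illustration. The point is that the mapping from business term to column becomes recorded data an agent can read instead of something it has to guess.

```typescript
// A hypothetical semantic-layer entry mapping a business term to a physical column.
interface MetricDefinition {
  term: string;       // business language, stored lowercase
  table: string;      // physical table
  column: string;     // physical column
  definition: string; // what the number actually means
  certified: boolean; // true for the definition reported to leadership
  version: number;    // bumped whenever the definition changes
}

// Illustrative entries: same column name, two different meanings.
const semanticLayer: MetricDefinition[] = [
  { term: "total revenue", table: "table_1", column: "total_revenue", definition: "Gross bookings before refunds", certified: false, version: 1 },
  { term: "total revenue", table: "table_2", column: "total_revenue", definition: "Net revenue after refunds and tax", certified: true, version: 3 },
];

// Resolve a business term to its latest certified definition.
// Throws when no certified definition exists, rather than guessing.
function resolveMetric(term: string, layer: MetricDefinition[]): MetricDefinition {
  const candidates = layer.filter((m) => m.term === term.toLowerCase() && m.certified);
  if (candidates.length === 0) {
    throw new Error(`No certified definition for "${term}"`);
  }
  // Among certified candidates, prefer the highest version.
  return candidates.reduce((best, m) => (m.version > best.version ? m : best));
}

const revenue = resolveMetric("Total Revenue", semanticLayer);
console.log(`${revenue.table}.${revenue.column}: ${revenue.definition}`);
```

With entries like these under version control, drift detection could work as a diff over them: a definition or version that changes without a matching change elsewhere gives an agent something concrete to flag.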

Structure Compounds

Think about what happens when an engineer defines the expected shape of their data in code. Every other piece of the system that touches that data now gets automatic validation. The next person who extends the system inherits those guardrails for free. Add a test, and changes become safer. Add a formal agreement between components, and the whole system becomes more resilient. Each piece of structure makes the next piece cheaper to add and harder to break. AI accelerates this because it reads those definitions, tests, and agreements and uses them as constraints to produce correct code faster.
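In TypeScript, the language the blog's components are written in, "defining the expected shape" can be as small as an interface plus one runtime guard. The field names below are hypothetical. Every caller that runs incoming data through isTrackedEvent gets the same checks for free, which is the compounding effect described above.

```typescript
// The expected shape of a tracked event, declared once (field names are hypothetical).
interface TrackedEvent {
  eventImplementationId: string;
  occurredAt: string; // ISO-8601 timestamp
  properties: Record<string, unknown>;
}

// Runtime guard mirroring the interface. Every consumer that calls it
// inherits the same validation instead of writing its own checks.
function isTrackedEvent(value: unknown): value is TrackedEvent {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  const id = v.eventImplementationId;
  const at = v.occurredAt;
  const props = v.properties;
  return (
    typeof id === "string" &&
    id.length > 0 &&
    typeof at === "string" &&
    !Number.isNaN(Date.parse(at)) &&
    typeof props === "object" &&
    props !== null
  );
}

const incoming: unknown = JSON.parse(
  '{"eventImplementationId":"evt_impl_checkout_continue_btn_v2","occurredAt":"2026-03-15T10:00:00Z","properties":{}}'
);
if (isTrackedEvent(incoming)) {
  // Inside this branch the compiler treats incoming as a TrackedEvent.
  console.log(incoming.eventImplementationId);
}
```

Schema libraries such as Zod generate this kind of guard from a single schema definition; the hand-written version just keeps the sketch dependency-free.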

Analytics rarely compounded in the same way because the semantic mapping was implicit. New analysis required rediscovering context. Product changes risked silent metric drift. The value of a senior analyst was in their institutional memory as much as their technical skill.

Start encoding that context in durable, versioned artifacts and that dynamic changes. An AI agent can detect unregistered events in pull requests, flag mismatches between documentation and implementation, and search version history to explain when and why something changed. The semantic layer used to decay because humans maintained it manually. With enough structure in place, AI takes over much of that work. The constraint shifts from ongoing governance to initial explicitness.
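A stripped-down version of the unregistered-event check might look like this. It assumes events are emitted as trackEvent("literal_id", ...) calls and that a pull request's changed files are available as a map from path to contents; both are assumptions for illustration. A regex scan misses IDs built from variables or template literals, so in practice an agent or a real parser would still need to review the diff.

```typescript
// Hypothetical registry shape, reduced to the one field this check needs.
interface RegistryEntry {
  event_implementation_id: string;
}

// Extract every string-literal ID passed as the first argument to trackEvent(...).
function findTrackedIds(source: string): string[] {
  const pattern = /trackEvent\(\s*["']([^"']+)["']/g;
  return Array.from(source.matchAll(pattern), (m) => m[1] as string);
}

// Return the sorted, de-duplicated IDs used in changed files that have no registry entry.
function findUnregisteredEvents(changedFiles: Record<string, string>, registry: RegistryEntry[]): string[] {
  const known = new Set(registry.map((e) => e.event_implementation_id));
  const missing = new Set<string>();
  for (const source of Object.values(changedFiles)) {
    for (const id of findTrackedIds(source)) {
      if (!known.has(id)) missing.add(id);
    }
  }
  return Array.from(missing).sort();
}

// Illustrative pull request touching one component.
const changed: Record<string, string> = {
  "components/CheckoutButton.tsx": `
    trackEvent("evt_impl_checkout_continue_btn_v2", { step: 1 });
    trackEvent('evt_impl_checkout_back_btn_v1');
  `,
};
const registry: RegistryEntry[] = [{ event_implementation_id: "evt_impl_checkout_continue_btn_v2" }];
console.log(findUnregisteredEvents(changed, registry));
```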

This ripples well beyond simple data requests. The same context fragmentation that makes "how many users clicked 'next' on the homepage?" hard to answer correctly also gates ML modeling, causal inference, A/B testing, and experiment design. If you can't trust event definitions, you can't trust the features going into your model. If you can't trace a metric's lineage, you can't design a clean experiment. The semantic layer is foundational infrastructure for the entire data science stack, not just for dashboards.

My Proof of Concept: Agentic Event Tracking

I built this on my personal blog to test whether the idea holds at the smallest possible scale. It's limited in scope, but it works end to end. The system covers three loops:

- Enforcing instrumentation during development

- Collecting data without dropping anything

- Answering natural language questions grounded in real definitions

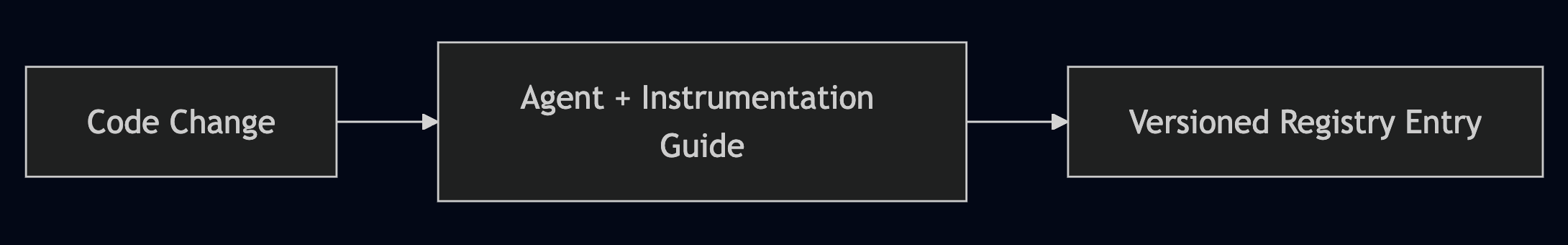

The write path: an AI coding agent reads a markdown instrumentation guide stored in the repo. The guide defines naming conventions, versioning rules, and a pre-merge checklist. When I build a new component, the agent walks through the workflow: create a versioned implementation ID, add the registry entry with metadata, wire up the trackEvent() call. Every event is logged to the database regardless of whether its ID exists in the registry yet. Gap detection happens after the fact. You can always add strictness later. You can't recover data you never collected to begin with.
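The "log everything, detect gaps later" rule fits in a few lines. In this sketch an in-memory array stands in for the database and the registry is reduced to a set of IDs; the trackEvent() signature shown is a guess at its shape, not the blog's actual implementation.

```typescript
// Shape of a row in the (hypothetical) events table.
interface LoggedEvent {
  event_implementation_id: string;
  occurred_at: string;
  properties: Record<string, unknown>;
}

// In-memory stand-in for the database table.
const eventLog: LoggedEvent[] = [];

// Hypothetical registry contents, reduced to the set of known IDs.
const registeredIds = new Set<string>(["evt_impl_checkout_continue_btn_v2"]);

// Write path: every call is recorded. No registry lookup, no rejection.
function trackEvent(id: string, properties: Record<string, unknown> = {}): void {
  eventLog.push({ event_implementation_id: id, occurred_at: new Date().toISOString(), properties });
}

// Gap detection, run after the fact: count logged events whose ID is not registered.
function findRegistryGaps(log: LoggedEvent[], registered: Set<string>): Map<string, number> {
  const gaps = new Map<string, number>();
  for (const e of log) {
    if (!registered.has(e.event_implementation_id)) {
      gaps.set(e.event_implementation_id, (gaps.get(e.event_implementation_id) ?? 0) + 1);
    }
  }
  return gaps;
}

trackEvent("evt_impl_checkout_continue_btn_v2", { step: 1 });
trackEvent("evt_impl_newsletter_signup_v1"); // not registered yet, but still recorded
trackEvent("evt_impl_newsletter_signup_v1");
const gaps = findRegistryGaps(eventLog, registeredIds);
console.log(Object.fromEntries(gaps));
```

Once a missing ID is registered, its earlier events become interpretable retroactively, because nothing was discarded in the meantime.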

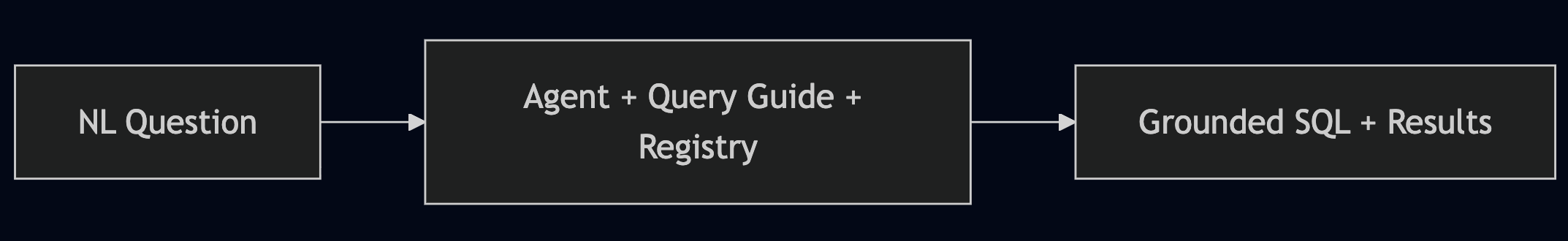

The read path: I ask a question in plain English. The agent reads a query guide, searches the registry for matching implementation IDs, generates grounded SQL, executes it via an MCP bridge, and returns validated results. All without leaving the editor. The implementation may not look the same everywhere, but the pattern should scale.
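The grounding step in the read path comes down to two moves: find the registered IDs that match the question, then constrain the SQL to exactly those IDs. A minimal sketch, assuming a Postgres-style events table with event_implementation_id and occurred_at columns and $n placeholders, none of which the post specifies. The v1 and newsletter entries are invented to show that one question can span several versions of the same button.

```typescript
// Hypothetical subset of the registry fields used for search.
interface RegistryEntry {
  event_implementation_id: string;
  description: string;
  page: string;
  tags: string[];
}

// Keep entries whose description, page, or tags mention every keyword.
function searchRegistry(registry: RegistryEntry[], keywords: string[]): RegistryEntry[] {
  const terms = keywords.map((k) => k.toLowerCase());
  return registry.filter((e) => {
    const haystack = [e.description, e.page, ...e.tags].join(" ").toLowerCase();
    return terms.every((t) => haystack.includes(t));
  });
}

// Build a parameterized count query restricted to the matched implementation IDs.
// Table and column names are assumptions, not the author's schema.
function buildCountQuery(ids: string[], since: string): { sql: string; params: string[] } {
  if (ids.length === 0) throw new Error("No registry match; refusing to guess an event name");
  const placeholders = ids.map((_, i) => `$${i + 1}`).join(", ");
  const sql = [
    "SELECT event_implementation_id, COUNT(*) AS n",
    "FROM events",
    `WHERE event_implementation_id IN (${placeholders})`,
    `  AND occurred_at >= $${ids.length + 1}`,
    "GROUP BY event_implementation_id",
  ].join("\n");
  return { sql, params: [...ids, since] };
}

const registry: RegistryEntry[] = [
  { event_implementation_id: "evt_impl_checkout_continue_btn_v1", description: "Checkout continue button (original, bottom of form)", page: "/checkout", tags: ["click", "checkout", "conversion"] },
  { event_implementation_id: "evt_impl_checkout_continue_btn_v2", description: "Checkout continue button (redesigned, moved to top)", page: "/checkout", tags: ["click", "checkout", "conversion"] },
  { event_implementation_id: "evt_impl_newsletter_signup_v1", description: "Newsletter signup form submit", page: "/blog", tags: ["submit", "newsletter"] },
];
const matches = searchRegistry(registry, ["checkout", "continue"]);
const query = buildCountQuery(matches.map((e) => e.event_implementation_id), "2026-03-01T00:00:00Z");
console.log(query.sql, query.params);
```

Throwing on an empty match keeps the agent from falling back to guessing an event name, and passing IDs as parameters keeps registry contents out of the SQL text itself.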

The core of the system is a versioned event registry, a JSON file in git where every trackable interaction has a unique implementation ID with metadata about what the user experienced, where the code lives, which commit introduced it, and when it was valid.

```json
{
  "event_implementation_id": "evt_impl_checkout_continue_btn_v2",
  "description": "Checkout continue button (redesigned, moved to top)",
  "file_path": "components/CheckoutButton.tsx",
  "commit_sha": "abc123...",
  "page": "/checkout",
  "introduced_at": "2026-03-15T00:00:00Z",
  "tags": ["click", "checkout", "conversion"]
}
```
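Because the registry is plain JSON in git, a pre-merge check can validate it mechanically. This sketch types an entry using the fields from the example and enforces an ID convention inferred from it (evt_impl_&lt;name&gt;_v&lt;N&gt;); that pattern and the specific checks are assumptions, not the post's documented rules.

```typescript
// Fields taken from the example registry entry.
interface RegistryEntry {
  event_implementation_id: string;
  description: string;
  file_path: string;
  commit_sha: string;
  page: string;
  introduced_at: string;
  tags: string[];
}

// Assumed convention, inferred from the example ID: evt_impl_<snake_case_name>_v<N>.
const ID_PATTERN = /^evt_impl_[a-z0-9]+(?:_[a-z0-9]+)*_v[1-9]\d*$/;

// Return human-readable problems; an empty array means the registry passes.
function validateRegistry(entries: RegistryEntry[]): string[] {
  const problems: string[] = [];
  const seen = new Set<string>();
  for (const e of entries) {
    const id = e.event_implementation_id;
    if (!ID_PATTERN.test(id)) problems.push(`${id}: does not match evt_impl_<name>_v<N>`);
    if (seen.has(id)) problems.push(`${id}: duplicate ID`);
    seen.add(id);
    if (Number.isNaN(Date.parse(e.introduced_at))) problems.push(`${id}: introduced_at is not a valid timestamp`);
    if (e.tags.length === 0) problems.push(`${id}: no tags`);
  }
  return problems;
}

const example: RegistryEntry = {
  event_implementation_id: "evt_impl_checkout_continue_btn_v2",
  description: "Checkout continue button (redesigned, moved to top)",
  file_path: "components/CheckoutButton.tsx",
  commit_sha: "abc123...",
  page: "/checkout",
  introduced_at: "2026-03-15T00:00:00Z",
  tags: ["click", "checkout", "conversion"],
};
console.log(validateRegistry([example, { ...example, event_implementation_id: "checkout_btn" }]));
```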

When the UI changes, a new versioned ID is created. The old one is deprecated but never deleted. Historical events stay interpretable. When someone asks "did we change the checkout button in March?", you query the registry instead of asking around.
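The bump-and-deprecate rule and the "did we change it in March?" query can both be expressed as small functions over the registry. This sketch adds a hypothetical deprecated_at field to capture the "when it was valid" window (the example entry only shows introduced_at), and the v1 entry and its date are invented for illustration.

```typescript
// Subset of registry fields, plus a hypothetical deprecated_at field.
interface RegistryEntry {
  event_implementation_id: string;
  description: string;
  page: string;
  introduced_at: string;
  deprecated_at?: string; // set when a newer version replaces this one
}

// Derive the next versioned ID: evt_impl_x_v2 -> evt_impl_x_v3 (assumed convention).
function nextVersionId(id: string): string {
  const match = /^(.*_v)(\d+)$/.exec(id);
  if (!match) throw new Error(`${id} has no _v<N> suffix`);
  return `${match[1]}${Number(match[2]) + 1}`;
}

// Supersede an entry: mark the old one deprecated (never delete it) and append the new version.
function supersede(registry: RegistryEntry[], oldId: string, description: string, at: string): RegistryEntry[] {
  const old = registry.find((e) => e.event_implementation_id === oldId);
  if (!old) throw new Error(`Unknown ID ${oldId}`);
  const updated = registry.map((e) => (e === old ? { ...e, deprecated_at: at } : e));
  return [...updated, { event_implementation_id: nextVersionId(oldId), description, page: old.page, introduced_at: at }];
}

// Entries for a page introduced within [from, to).
function changesBetween(registry: RegistryEntry[], page: string, from: string, to: string): RegistryEntry[] {
  const start = Date.parse(from);
  const end = Date.parse(to);
  return registry.filter((e) => {
    const t = Date.parse(e.introduced_at);
    return e.page === page && t >= start && t < end;
  });
}

let registry: RegistryEntry[] = [
  { event_implementation_id: "evt_impl_checkout_continue_btn_v1", description: "Checkout continue button (original)", page: "/checkout", introduced_at: "2025-06-01T00:00:00Z" },
];
registry = supersede(registry, "evt_impl_checkout_continue_btn_v1", "Checkout continue button (redesigned, moved to top)", "2026-03-15T00:00:00Z");
const marchChanges = changesBetween(registry, "/checkout", "2026-03-01T00:00:00Z", "2026-04-01T00:00:00Z");
console.log(marchChanges.map((e) => e.event_implementation_id));
```

Returning a new array from supersede keeps each registry state a plain value, which maps naturally onto one commit per change in git.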

About 500 lines of code, two markdown files, a JSON registry, and three lines of config. No orchestration framework. No additional infrastructure. The hard part was codifying the context, not building pipelines, and I expect that part to keep getting easier as models and agent orchestrators improve.

Record First, Normalize Later

This is a transitional step. I'm not claiming this is the optimal data architecture, or that I've figured out the right conventions, or that humans will even be designing these layers in the future.

Very simply, you can't retrieve information you never recorded, but you can always reformat it later. AI is unusually good at reconciling semi-structured information. It is terrible at reconstructing institutional memory that was never written down. The cost of not starting is permanent: unrecorded context and tribal knowledge that walks out the door when someone leaves. The cost of starting imperfectly is just refactoring, and the tools are already good enough to make that cheap.

Start recording. Enforce some event tracking. Codify what you know about your data in a structured, machine-readable way. The specific format matters far less than the act of recording the context at all.

The organizations that unlock 10x analytics won't have the best model. They'll have made their meaning explicit.

Event tracking is the first bridge. The pattern generalizes to data dictionaries, metric definitions, experiment configurations, anything where tribal knowledge is the bottleneck.

The idea is not new, but the tooling and AI capabilities are. Encode meaning, let structure compound.